Webscraper pagination
12/30/2023

#"Converted to Table" = Table.FromList(Source, Splitter.SplitByNothing(), null, null, ExtraValues.Error),
#"Renamed Columns" = Table.RenameColumns(#"Converted to Table",)

I've found a number of resources that are likely helpful, but my M isn't currently good enough for me to understand how to generalise the approaches.

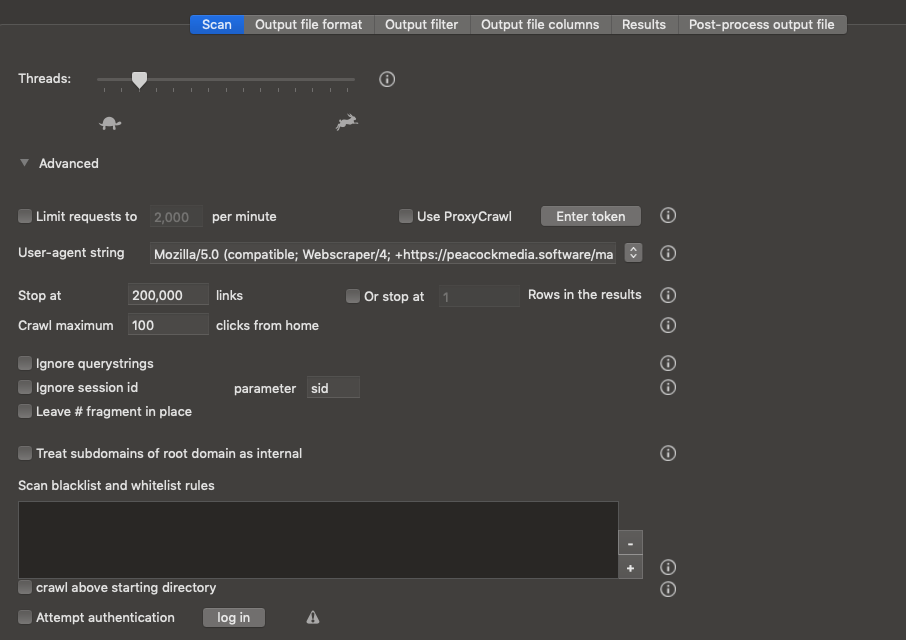

I've got a Power Query query that pulls data from a paginated URL back into Excel for analysis. The general approach I'm taking is from this post at the datachant blog, with multiple pages of results being obtained as per this post at Matt Masson's blog. The only problem is that Matt's approach hard-codes the number of pages that actually exist, and if you feed it a bigger number to handle the possibility of growth, you get an error. I'd like to be able to feed the function an oversized number of pages, so that I automatically capture any growth in pages going forward. Is there any way I can restructure the below wrapper code so that I can, say, call the function with page parameters from 1 to 100 and simply ignore any errors that are generated when I exceed the number of pages currently available? let
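One possible way to tolerate the missing pages is M's `try ... otherwise` expression, which can swap a failed call for `null` so the failures can be filtered out afterwards. The sketch below is only an illustration under assumptions: `GetPage` is a hypothetical stand-in for the real per-page function (here it pretends that only pages 1 to 3 exist), and the step names are made up for the example.

```powerquery
let
    // Hypothetical stand-in for the real page function (an assumption, not the
    // poster's code): returns a one-row table for pages 1 to 3 and raises an
    // error for any page beyond that.
    GetPage = (page as number) as table =>
        if page <= 3
            then #table({"Page"}, {{page}})
            else error "Page does not exist",

    // Deliberately ask for more pages than currently exist.
    PageNumbers = {1..100},

    // try ... otherwise replaces each failed call with null
    // instead of failing the whole query.
    Pages = List.Transform(PageNumbers, each try GetPage(_) otherwise null),

    // Drop the nulls and stack the remaining tables.
    Combined = Table.Combine(List.RemoveNulls(Pages))
in
    Combined
```

With a real web source, the error may only surface when the page's table is actually read rather than when the function is called, so the `try` may need to wrap a step that forces evaluation (for example `Table.Buffer`). Note also that this approach still issues a request for every page number in the list, including the ones that fail.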

Howdy folks. I thought IMPORTXML should work but, as you can see, I get nonsense. I can’t find an example that shows this use case, where the specific web page is dynamic based on the 5-digit value in column AB. Is there a way you can think of doing this that simplifies the syntax, versus the squirrelly way Googlers think about it and thus explain it in the examples that are available online? I’ve spent hours on YouTube trying to work through the syntax, to save the time required to manually look up each record that doesn’t come through. I appreciate any help you can provide or any resource you can point me to.

My file is a publicly available NARA (National Archives) file download, formatted and expanded with formulas, etc. A couple of “index/match” formulas in column C and column AB look up the state that assigned each SSN and the city and state corresponding to the person’s zip code at the time of death. Column C, the assigning state, is easy: it populates 100% of the time. Column AB, however, accesses the table in sheet 2, “Master 5-Digit…”, which includes 33000+ zip codes but actually excludes quite a few. As many as 10% of the lookups return no match.

For the purposes of this post, I’m going to demonstrate the technique using posts from the New York Times. Grab the solution file for this tutorial:

Let’s take a random New York Times article and copy the URL into our spreadsheet, in cell A1 (screenshot: example New York Times URL).

Navigate to the website, in this example the New York Times (screenshot: New York Times).

Note: I know what you’re thinking, wasn’t this supposed to be automated?!? Yes, and it is. But first we need to see how the New York Times labels the author on the webpage, so we can then create a formula to use going forward.

Hover over the author’s byline, right-click to bring up the menu, and click "Inspect Element" (screenshot: New York Times inspect element selection).

This brings up the developer inspection window, where we can inspect the HTML element for the byline (screenshot: New York Times element in developer console).

In the new developer console window, there is one line of HTML code that we’re interested in, and it’s the highlighted one.

We’re going to use the IMPORTXML function in Google Sheets, with a second argument (called “xpath-query”) that accesses the specific HTML element above.

Copy this formula into cell B1, next to our URL:

The xpath-query looks for span elements with the class name “byline-author” and then returns the value of that element, which is the name of our author.
